James Howell

- on March 24, 2023

A Complete Introduction to Prompt Engineering

Artificial intelligence, or AI, is an emerging technology that almost everyone is talking about. AI could transform how technology is used by giving machines capabilities that resemble human reasoning and language. For example, you have probably heard discussions about AI taking over people's jobs in the coming years. Amid the praise and criticism of AI, prompt engineering, or prompting, has emerged as a disruptive concept in how artificial intelligence is used.

The most prominent example of prompting, which has gained formidable traction in recent times, is ChatGPT. It takes text-based inputs and answers questions in a manner that often resembles an expert in the relevant domain. As the use cases of prompting gain momentum, they are likely to become embedded in the business landscape.

The jargon in the domain of AI can make it difficult to follow a prompt engineering tutorial and understand its significance. Beginners can have trouble navigating AI jargon and the other technical aspects of prompting. This post serves as a detailed introduction to prompting and the basics of a prompt. It also covers the important principles and pillars of prompting alongside its fundamental uses.

Definition of Prompt Engineering

Any discussion of prompting starts with its definition, so it helps to answer the question “What is prompt engineering in AI?” first. Prompting relies on using prompts to obtain required results from AI tools such as NLP services.

A prompt can be a simple statement, a block of programming logic, or even a string of words. Different ways of phrasing and deploying prompts draw different responses. Prompting an AI model works much like prompting a person to answer a question or start an essay.

In the same way, you can use prompts to guide AI models toward the desired results for specific tasks. For example, an AI model could take a specific prompt and create an essay on the basis of its inputs, just as a human writer would. From a technical perspective, prompt engineering is the design and development of prompts.

Prompts serve as inputs for AI models and steer an already trained model toward a specific task, without retraining it. The engineering of prompts emphasizes selecting the appropriate type of data and formatting it so that the model can understand and use the prompt. Prompting focuses on creating high-quality inputs, which help the AI model make accurate decisions and predictions.

Examples of Prompt Engineering

The fundamentals of prompting would be incomplete without examples. You can find popular prompt engineering examples in language models such as GPT-2 and GPT-3. In 2021, multitask prompting across different NLP datasets delivered good performance.

Language models can reason more accurately when the prompt includes examples that show a progression of thought. In a zero-shot setting, encouraging the model to reason step by step has also proved effective for multi-step reasoning use cases.

Other examples of prompt engineering gained prominence in 2022 with the launch of new machine learning models. Some of these models, such as Stable Diffusion and DALL-E, have served as the foundations of text-to-image prompting. As the name implies, they use prompting to convert text prompts into visuals.

What are Prompts?

The most common means of interaction between users and AI models is text, in the form of prompts. As a matter of fact, the answer to “How does prompt engineering work?” becomes clearer with an in-depth understanding of prompts. At its most basic, a prompt is a broad instruction you give to the AI model.

For example, Stable Diffusion and DALL-E need prompts in the form of detailed descriptions of the desired visual output. On the other hand, prompts for large language models (LLMs) such as ChatGPT and GPT-3 can be simple queries. At the same time, LLMs can also handle complex problems and instructions with multiple facts in the prompt. A prompt can even be a short, open-ended statement such as “Tell me the description.”

The most important lesson from prompt engineering examples concerns the components of a prompt. At a high level, you can identify four distinct components: instructions, questions, input data, and examples. The successful design of prompts depends on an effective combination of these elements.
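As a minimal sketch of how these four components can be combined, the hypothetical helper below assembles a prompt string from optional parts. The function name `build_prompt` and the `Input:`/`Output:` labels are illustrative assumptions, not a standard format.

```python
def build_prompt(instruction="", examples=(), input_data="", question=""):
    """Assemble a prompt from the four components; empty parts are skipped."""
    parts = []
    if instruction:
        parts.append(instruction.strip())
    # Each example is an (input, output) pair shown to the model.
    for example_input, example_output in examples:
        parts.append(f"Input: {example_input}\nOutput: {example_output}")
    if input_data:
        parts.append(f"Input: {input_data.strip()}")
    if question:
        parts.append(question.strip())
    return "\n\n".join(parts)


prompt = build_prompt(
    instruction="Classify the sentiment of each review as positive or negative.",
    examples=[
        ("Great battery life.", "positive"),
        ("Stopped working in a week.", "negative"),
    ],
    input_data="The screen is sharp and bright.",
    question="What is the sentiment of the last review?",
)
print(prompt)
```

Not every prompt needs all four parts. A simple query may consist of the question alone, which is why each argument is optional here.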

Principles of Prompt Engineering

Prompts, the fundamental building block of prompting, show how simple it can be to guide AI models toward desired tasks. Now, you must be curious about the methods for designing prompts. Here are the important principles that can guide the development of effective prompts for AI models.

- Generation of Useful Output

The first principle in a prompt engineering tutorial is to guide AI models toward generating useful outputs. Imagine a scenario where you want a summary of an article. In this case, you can give a large language model a prompt that contains enough information to produce that summary. What would you include in the prompt for this example? The prompt would include the input text and a description of the task, i.e., how you want the summary.

- Work on Different Prompt Variations for Best Results

A detailed understanding of “What is prompt engineering in AI?” also draws attention to the need for trying multiple prompt designs. Even small changes in the wording of the same prompt can generate significantly different outputs.

AI models learn that different formulations of a prompt apply to different contexts and purposes. For example, the summarization task need not rely only on a simple prompt such as “In summary.” You could also try variations such as “The main objective of the article is that” or “Summary of the article in plain language is.”
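To compare formulations side by side, it can help to generate every variation from the same input text. The sketch below is one hypothetical way to do that; the helper name `prompt_variations` is an assumption, and the closing cues are the ones quoted in this section.

```python
CLOSING_CUES = [
    "In summary,",
    "The main objective of the article is that",
    "Summary of the article in plain language is",
]


def prompt_variations(article_text, cues=CLOSING_CUES):
    """Return one prompt per closing cue, each ending where the model should continue."""
    return [f"{article_text.strip()}\n\n{cue}" for cue in cues]


variations = prompt_variations(
    "Prompts are the inputs that guide an AI model toward a task."
)
for number, variation in enumerate(variations, start=1):
    print(f"--- Variation {number} ---\n{variation}\n")
```

Each variation would then be sent to the model separately, so that the outputs can be compared for the same article.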

In addition, prompt engineering examples also point to the use of likelihood features, which report how probable the model finds each part of a text. They can help in finding out whether the model has difficulty understanding specific words, structures, or phrases. At the same time, remember that likelihood tends to be higher at the beginning of a sequence. The model could assign a low likelihood to an unfamiliar term when it first appears and handle it more readily once it has seen it in the prompt. The likelihood feature also helps prompt engineers identify spelling or punctuation issues in the prompt.
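As a rough illustration of how likelihood scores might be inspected, the sketch below flags tokens whose log-likelihood falls below a threshold. The scores, the threshold, and both function names are hypothetical; a real service would return its own per-token values in its own format.

```python
def flag_unlikely_tokens(token_logprobs, threshold=-6.0):
    """Return the tokens whose log-likelihood is below the threshold."""
    return [token for token, logprob in token_logprobs if logprob < threshold]


def average_logprob(token_logprobs):
    """Average log-likelihood across all tokens in the prompt."""
    return sum(logprob for _, logprob in token_logprobs) / len(token_logprobs)


# Hypothetical (token, log-likelihood) pairs; the misspelled "summray" scores low.
scored_prompt = [
    ("Write", -1.2),
    ("a", -0.4),
    ("summray", -9.7),
    ("of", -0.8),
    ("the", -0.3),
    ("article", -1.5),
]

print(flag_unlikely_tokens(scored_prompt))
print(round(average_logprob(scored_prompt), 2))
```

A token that stands out with a very low score is a reasonable place to look for a typo or an unusual phrase.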

- Definition of Task and General Setting

A basic part of defining a task is including additional components in the task description. Prompts generate the desired results when they give the model adequate context. In the summarization use case, for instance, you can describe the summarization task in detail. Critical components of a prompt, such as input and output indicators, help define the desired task clearly for the model. A detailed description of the prompt components is especially helpful when you include multiple examples in a prompt.

- Show the Desired Output to the Model

Examples are central to answering “How does prompt engineering work?” and serve as effective instruments for achieving the desired output. Examples show the model the type of output you want. You can use few-shot learning, in which the prompt includes a handful of worked examples, to demonstrate that output.
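A few-shot prompt can be sketched as a task description, a handful of worked examples, and the new input, with input and output indicators marking each part. The helper name `few_shot_prompt` and the `Text:`/`Summary:` labels below are assumptions chosen for illustration.

```python
def few_shot_prompt(task_description, examples, new_input,
                    input_label="Text", output_label="Summary"):
    """Build a few-shot prompt: task description, worked examples, then the new input."""
    blocks = [task_description.strip()]
    for example_input, example_output in examples:
        blocks.append(
            f"{input_label}: {example_input}\n{output_label}: {example_output}"
        )
    # End on the output indicator so the model continues with the answer.
    blocks.append(f"{input_label}: {new_input}\n{output_label}:")
    return "\n\n".join(blocks)


shots = [
    ("The meeting moved from Monday to Wednesday at noon.", "Meeting is now Wednesday noon."),
    ("Sales rose in spring and fell again in autumn.", "Sales peaked in spring."),
]
prompt = few_shot_prompt(
    "Summarize each text in one short sentence.",
    shots,
    "The library will close early on Friday for repairs.",
)
print(prompt)
```

Ending the prompt on the output indicator is a common way to signal where the model's answer should begin.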

Important Pillars of Prompt Engineering

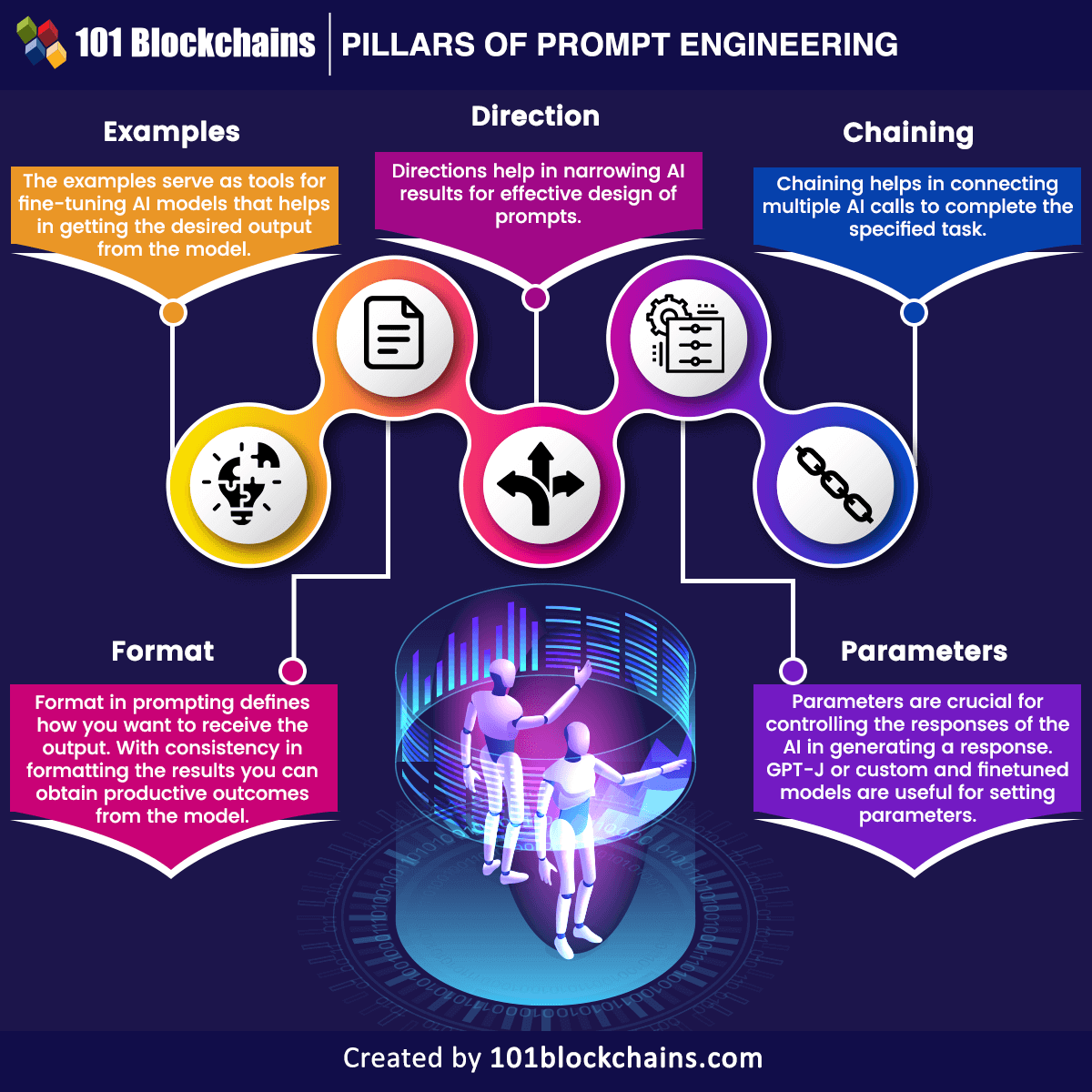

The next crucial aspect of a prompt engineering tutorial is the set of components that make up prompting. You can find five distinct components in the development of prompts: examples, direction, parameters, format, and chaining.

The five pillars of prompting are important requirements for creating effective prompts which deliver desired results. Let us find out more about the implications of these pillars in the design of prompts.

- Examples

The first pillar of prompting is examples. Examples represent the output you expect from the model. How can GPT-3 achieve good results? Its zero-shot capability helps it produce answers without any examples.

On the other hand, adding examples can markedly improve response quality. However, it is important to exercise caution when offering examples to AI models. For example, too many similar examples could reduce the creativity of the model in delivering outputs. Examples can also serve as material for fine-tuning AI models.

- Direction

The second pillar of effective prompt design is direction. While examples help in generating desired outputs, you also have to give the AI model directions. Directions are an important part of “How does prompt engineering work?” because they narrow the range of AI results. Using directions to prompt AI models helps you obtain results closer to what you want.

- Parameters

Parameters are crucial for changing the type of answer generated by AI models. The majority of AI models offer some degree of flexibility. In the case of GPT-3, the most noticeable parameter is the “temperature,” which controls the degree of randomness in generating a response. Large language models select the next word of a sentence from a range of plausible candidates, so the same prompt does not always yield the same output.

With the help of temperature, you can control the responses of the AI. For example, a higher temperature tends to produce more varied, creative answers, while a lower temperature produces more predictable, generic responses. Open-source models such as GPT-J, or custom and fine-tuned models, typically give you more parameters to adjust.
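The effect of temperature can be illustrated with a small, self-contained sketch of temperature-scaled softmax sampling. This is a simplified model of how sampling is commonly described, not the internals of GPT-3 or any specific model; the candidate words and their scores are made up for the example.

```python
import math
import random


def apply_temperature(logits, temperature):
    """Turn raw scores into probabilities using a temperature-scaled softmax."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    weights = [math.exp(value - peak) for value in scaled]
    total = sum(weights)
    return [weight / total for weight in weights]


def sample_next_word(words, logits, temperature, rng=random):
    """Pick one candidate word at random, weighted by its probability."""
    probabilities = apply_temperature(logits, temperature)
    return rng.choices(words, weights=probabilities, k=1)[0]


words = ["cat", "dog", "axolotl"]
logits = [2.0, 1.5, 0.1]  # hypothetical scores for the next word

for temperature in (0.2, 1.0, 2.0):
    probabilities = apply_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probabilities])
```

At a low temperature almost all of the probability goes to the top-scoring word, while a higher temperature spreads it across the alternatives, which is why outputs become more varied.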

- Format

As the name implies, the format in prompting defines how you want to receive the output. Experimental use of AI, such as asking ChatGPT questions, places little emphasis on the structure of the model's answers. By contrast, the future of prompting calls for integrating AI into production tools, which need structured inputs. Without consistent formatting of the results, you are less likely to obtain productive outcomes from the model. The format of the output depends significantly on the design of the prompt and on how the prompt ends.
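One way to make the format requirement concrete is to ask for JSON in the prompt and validate the reply before passing it to other tools. The instruction text, the key names, and the helper `parse_structured_reply` below are assumptions for illustration, and the reply is a hand-written stand-in for a model response.

```python
import json

FORMAT_INSTRUCTION = (
    'Respond only with a JSON object with the keys "title" (string) '
    'and "keywords" (list of strings).'
)


def parse_structured_reply(reply_text, required_keys=("title", "keywords")):
    """Parse a reply as JSON and check the required keys; raise ValueError otherwise."""
    try:
        data = json.loads(reply_text)
    except json.JSONDecodeError as error:
        raise ValueError(f"reply is not valid JSON: {error}") from error
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    missing = [key for key in required_keys if key not in data]
    if missing:
        raise ValueError(f"reply is missing keys: {missing}")
    return data


# Hypothetical model reply used for illustration.
reply = '{"title": "Intro to Prompting", "keywords": ["prompt", "LLM"]}'
print(parse_structured_reply(reply)["keywords"])
```

Rejecting replies that do not match the expected structure keeps malformed output from reaching downstream code, at the cost of having to retry or repair the occasional reply.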

- Chaining

The final component in prompting is chaining, which connects multiple AI calls to complete a specified task. A review of responses to “What is prompt engineering in AI?” would showcase the importance of chaining. Because prompt length is limited, larger tasks must be broken into multiple prompts. One popular chaining tool is LangChain, which helps string multiple actions together while keeping prompts organized.
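The idea behind chaining can be sketched in plain Python, without relying on LangChain's own API: split a long text into pieces that fit the prompt limit, summarize each piece, and then combine the partial summaries in one final call. The `fake_model` function is a stand-in for a real model call, and all names and prompt wordings here are hypothetical.

```python
def split_into_chunks(text, max_words):
    """Split text into pieces of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def chained_summary(text, call_model, max_words=5):
    """Summarize each chunk, then summarize the combined partial summaries."""
    partial_summaries = [
        call_model(f"Summarize this passage:\n{chunk}")
        for chunk in split_into_chunks(text, max_words)
    ]
    combined = "\n".join(partial_summaries)
    return call_model(f"Combine these partial summaries into one summary:\n{combined}")


calls = []  # records every prompt sent to the stand-in model


def fake_model(prompt):
    """Stand-in for a model call: reports how many words followed the instruction line."""
    calls.append(prompt)
    body = prompt.split("\n", 1)[1]
    return f"<summary of {len(body.split())} words>"


document = "one two three four five six seven eight nine ten eleven twelve"
final = chained_summary(document, fake_model, max_words=5)
print(len(calls), final)
```

Passing the model call in as a function keeps the chaining logic independent of any particular provider, so the stand-in can later be swapped for a real client.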

Use Cases of Prompt Engineering

The final highlight in a prompt engineering tutorial is the use cases of prompting. After learning the fundamentals of prompting, you may have questions about the basic uses of prompts. Here are the top use cases of prompt engineering.

- Creation of text summaries for articles or essays.

- Extracting information from massive blocks of text.

- Classification of text.

- Specification of intent, identity and behavior of AI systems.

- Code generation tasks.

- Reasoning tasks.

The advanced uses of prompting include few-shot learning, zero-shot learning, and chain-of-thought prompting. You can also use prompts to supply a large language model with the information it needs to produce accurate answers. Another advanced technique is self-consistency, which samples several different reasoning paths and selects the answer on which they most often agree.
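Self-consistency can be sketched as a majority vote over several sampled answers to the same prompt. In the example below, `fake_sampler` replays canned answers in place of a real model sampled at a non-zero temperature; the function names, the question, and the answers are hypothetical.

```python
from collections import Counter


def self_consistent_answer(sample_answer, prompt, num_samples=5):
    """Sample several answers to one prompt; return the most common answer and its vote share."""
    answers = [sample_answer(prompt) for _ in range(num_samples)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / num_samples


# Canned final answers standing in for five independently sampled reasoning paths.
canned_answers = iter(["18", "18", "20", "18", "17"])


def fake_sampler(prompt):
    """Stand-in for a model call that returns the final answer of one reasoning path."""
    return next(canned_answers)


cot_prompt = (
    "Q: A shop sells 3 boxes of 6 pens each. How many pens is that?\n"
    "A: Let's think step by step."
)
answer, agreement = self_consistent_answer(fake_sampler, cot_prompt)
print(answer, agreement)
```

The vote share gives a rough signal of how much the sampled reasoning paths agree, which can be useful for deciding when an answer needs a closer look.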

Conclusion

This guide to prompt engineering provided insights into the fundamentals of prompts and how they function for AI models. The use cases of artificial intelligence models are gradually expanding toward futuristic applications. Prompts guide AI models much as instructions guide a human toward specific tasks. Prompt engineering examples, such as ChatGPT and GPT-3, offer insights into how prompting can revolutionize AI.

At the same time, it is also important to know the recommended principles of prompting. The pillars of prompting outlined in the discussion also give a comprehensive impression of how you can use prompting. Learn more about prompt engineering and explore the potential of AI for changing the future.